
Microsoft Azure speech to text API

6/11/2023

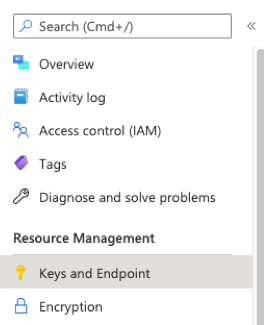

When we do a live presentation, whether online or in person, there are often folks in the audience who are not comfortable with the language we're speaking or who have difficulty hearing us. Microsoft created Presentation Translator to solve this problem in PowerPoint by sending real-time translated captions to audience members' devices.

In this article, we'll look at how (with not too many lines of code) we can build a similar app that runs in the browser. It will transcribe and translate speech using the browser's microphone and broadcast the results to other browsers in real time. And because we are using serverless and fully managed services, it can scale to support thousands of audience members. Best of all, these services all have generous free tiers, so we can get started without paying for anything!

Overview

The app has two parts:

- A Vue.js app that is our main interface. It uses the Microsoft Azure Cognitive Services Speech SDK to listen to the device's microphone and perform real-time speech-to-text and translations.
- An Azure Function app providing serverless HTTP APIs that the user interface will call to broadcast translated captions to connected devices using Azure SignalR Service.

Most of the heavy lifting required to listen to the microphone from the browser and call Cognitive Speech Services to retrieve transcriptions and translations in real time is done by the service's JavaScript SDK. You can create a free account (up to 5 hours of speech-to-text and translation per month) and view its keys with the Azure CLI.

In the browser, the SDK setup follows four steps: listen to the device's microphone through an `AudioConfig` created with `fromDefaultMicrophoneInput()`, create a `SpeechTranslationConfig` using the key and region created for the Speech Services account, configure the language to listen for (e.g., 'en-US'), and add one or more languages to translate to.

The `selectLanguage` function is invoked with a `languageCode` and a `userId` in the body. We'll output a SignalR group action for each language that our application supports, setting an action of `add` for the language we have chosen to subscribe to and `remove` for all the remaining languages. This ensures that any existing subscriptions are deleted.

Lastly, we need to modify our Vue app to call the `selectLanguage` function when our component is created. We do this by creating a watch on the language code that will call the function whenever the user updates its value. In addition, we'll set the `immediate` property of the watch to true so that it will call the function immediately when the watch is initially created.
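The Speech SDK setup this article describes (microphone input, key and region, source language, target languages) could be sketched roughly as follows. This is a minimal sketch, not the app's actual code: the `sdk` parameter stands for the object exported by the `microsoft-cognitiveservices-speech-sdk` package, and the option names `key` and `toLanguages` are hypothetical.

```javascript
// Sketch only. `sdk` is assumed to be the export of the
// microsoft-cognitiveservices-speech-sdk package; it is passed in as a
// parameter so the wiring can be exercised without a real microphone.
// The option names `key` and `toLanguages` are hypothetical.
function createTranslationRecognizer(sdk, options) {
  // listen to the device's microphone
  const audioConfig = sdk.AudioConfig.fromDefaultMicrophoneInput();

  // use the key and region created for the Speech Services account
  const speechConfig = sdk.SpeechTranslationConfig.fromSubscription(
    options.key,
    options.region
  );

  // configure the language to listen for (e.g., 'en-US')
  speechConfig.speechRecognitionLanguage = options.fromLanguage;

  // add one or more languages to translate to
  for (const lang of options.toLanguages) {
    speechConfig.addTargetLanguage(lang);
  }

  // the recognizer ties the audio source to the translation settings
  return new sdk.TranslationRecognizer(speechConfig, audioConfig);
}
```

With the real SDK, the returned recognizer would typically be started with `startContinuousRecognitionAsync()` after attaching handlers for its recognition events.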
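The group subscription logic for the `selectLanguage` function can be illustrated with a small pure function. This is a sketch under assumptions: the list of supported languages is made up, and the `{ userId, groupName, action }` shape follows the group action format used by the Azure Functions SignalR Service output binding as I understand it, not code taken from the app.

```javascript
// Hypothetical list of languages the application supports.
const SUPPORTED_LANGUAGES = ["en", "es", "fr", "de"];

// Build one SignalR group action per supported language:
// "add" for the chosen language, "remove" for every other language,
// so that any existing subscriptions for this user are deleted.
function buildGroupActions(languageCode, userId, supportedLanguages = SUPPORTED_LANGUAGES) {
  return supportedLanguages.map((lang) => ({
    userId,
    groupName: lang,
    action: lang === languageCode ? "add" : "remove",
  }));
}
```

Emitting a `remove` for every other language keeps the function stateless: it never needs to look up which group the user was in before.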
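The watch on the language code might look something like the component options below. This is only a sketch: the component name, the `userId` value, and the `/api/selectLanguage` URL are hypothetical, and the object is shown without importing Vue so it can be read on its own.

```javascript
// Hypothetical Vue component options (Options API style).
const captionsComponent = {
  data() {
    return { languageCode: "en", userId: "user-1" };
  },
  watch: {
    languageCode: {
      // called whenever the user updates the language code
      handler(newCode) {
        return this.selectLanguage(newCode);
      },
      // also call the handler once, right when the watch is created
      immediate: true,
    },
  },
  methods: {
    // Posts the chosen language and user id to the function app.
    // The URL is an assumption for illustration.
    selectLanguage(languageCode) {
      return fetch("/api/selectLanguage", {
        method: "POST",
        headers: { "Content-Type": "application/json" },
        body: JSON.stringify({ languageCode, userId: this.userId }),
      });
    },
  },
};
```

Because of `immediate: true`, the initial language is subscribed to as soon as the component is created, without a separate call in a lifecycle hook.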
